February & June 2023

Disproportionately featuring Crown Tower.

by Mary

End of year reflections, for the 10th time.

Not getting Taylor Swift tickets. There was an entire shared national experience of staring at a lightweight HTML page on the Ticketek website that reloaded every few minutes; not counting the folks who did get tickets, who you could therefore argue aren't really Australian anymore. I didn't even see the screen of anyone who did get tickets, but I do know a few people who got VIP tickets in earlier and pricier sales. Those are committed people; one also flew to LA to see a concert there, and another bought VIP tickets to every Australian show. National loyalty isn't their key loyalty.

About an hour after the main sale wrapped up, I got a phone call to say a family member was in hospital and I seriously wondered if somehow Taylor Swift had done it. (For the record: no, and also the family member is better.)

Sitting in a friend’s bathtub at a party. My family went to a friend’s birthday straight from the beach and she offered us a shower. I fell very hard and very suddenly, landing hard but neatly into a bathing position, and spent a few horrible minutes contemplating my mortality. My daughter and I shouted for help but weren’t heard or understood, so we collected ourselves slowly, dressed, and went back to the party.

Keeping my job. My son’s birthday, which every four years falls across the US presidential inauguration — on his seventh birthday we marked his progress in reading by his asking why someone had written “Trump” in the sky — was instead ushered in this year by my employer laying off 6000 US employees abruptly at about 9pm the night before in local time (early in the morning, US time). We talked about it into the midnight of his birthday, “we’ll just have to see, we’ll just have to see.”

I found out about the Australian layoffs some weeks later, on a bus, via a message from a very senior manager trying to confirm the warnings had gone out. You tell me!

I was not laid off; one of my former bosses and at least one of my former employees were.

New Year's at Infinity. Every third or fourth New Year's we decide to join some kind of Sydney festivity. So a year ago Andrew and I went to Infinity, the rotating restaurant in Sydney Tower. I don't, at this point, remember anything about the food, although it was fine. I remember that the table next to us was a Canadian couple, the man determined to have a terrible time come what may. They pause the rotation of the floor for the fireworks displays, so unless you are lucky you have to walk around the restaurant to see them. Our neighbour was specifically convinced that his table had been punitively chosen to have no view of the fireworks, and further believed that Chinese patrons had bribed the staff to get the view. He explained all this at length both to his wife and to whichever senior member of the staff drew the short straw that night.

The service was kind of slow and two courses were served this year, after midnight. They kept trying to comp us New Year's wine; we weren't sure whether it was for the slow service, the terrible table nearby, or just that they had a lot of leftover wine.

Watermelon and goat’s cheese salad. My mother and her cousins had a family reunion at the Marsden Brewhouse and I ordered this thinking it sounded like a light lunch of, say, cubes of watermelon tossed with crumbled goat’s cheese.

It was actually a rectangular slab of watermelon, like a piece of toast if it came from the world's largest loaf and was cut three times as thick as toast, with an entire layer of goat's cheese slathered over the top like peanut butter. A no-expense-spared level of goat's cheese.

Quite good honestly, but unexpected.

Management dinner at Kid Kyoto. A few representatives of my management chain were in Sydney in early December and we had dinner at Kid Kyoto. Someone took a bet on ordering a large bottle of sake despite the table's insistence that we would never drink that much sake. Again with the watermelon; this time watermelon sashimi. More thinly sliced than at the Marsden Brewhouse.

Watching sunset reflect off Crown Towers. On the one hand, Crown Towers is evil; it took several years for it to be clean enough to begin to operate as a casino. And I don't love the shape of it either (it's known as Packer's Pecker for a reason).

But it’s all glass, and at sunset it begins by catching fire from top to bottom, and ends all black except for bright orange flame at the top. Someone paid enough money to bottle Sydney Harbour in that building, and Andrew and I paid enough money to watch it burn from the lower floors of the Sofitel mid-year.

Hanging out with my kids in bed. They're very large now, and they like to show up in our bed a few times a week to cuddle me, tease each other, and really disturb the peace.

Snorkelling Byron Bay. We dived Julian Rocks in the morning, which was less fun because I hate diving shallower than 5 metres, since buoyancy is a pain. We then went back out to snorkel it with the kids in the afternoon, having perhaps optimistically represented the younger one's swimming ability to the dive shop. But the snorkelling was in the lee of the rocks, and led by a freediver who made it her business to go down and point out the turtles. My younger child refused to be towed and we all swam out over the sharks together.

The death toll in Gaza will pass 10,000 children alone, early in 2024.

The Indigenous Voice referendum failed. Since this was thought to be doomed weeks and months in advance — referenda rarely pass in Australia without the support of the Opposition, and it was polling poorly — I think there was considerable government appetite to quietly move on, and so it did, right into the NZYQ v Minister for Immigration pit. A thoroughly ignoble and unoptimistic year in Australian race politics. What now?

The trial of Sam Bankman-Fried served as a rare moment when the villain was pretty obvious and the system recognised him as such.

Wind from the south. 2022 was a year without Sydney's southerly busters, but with the return of more normal summer weather the southerly returned in 2023, and with it the 5 seconds of relief between opening the humid, still house to the cool wind and closing it up again because the wind is now a gale.

Bruises on my wrists from dumbbells. I've been taking weights classes for about a year and a half, which normally use dumbbells because it's quicker to set them up than it is to set up a bar. But I now deadlift into a weight range where if I don't balance the dumbbells exactly right in my hands, they lean onto my wrists and leave bruises.

Pain in my right heel. I’m more than 12 months into a bout of plantar fasciitis, which largely killed my long walks, one of my best stress relief activities. It ranges from “have to steel myself to get out of bed” through to “niggles after walking several kilometres”, largely depending on how recently I have embraced my fated destiny to spend the rest of my life in shoes mostly made out of foam.

Funerals in my family tend to fall on the bluest and clearest of days, and my mother's uncle's was no different. His widow, my grandmother's last living sister, sat crying in the front row, when she had enough energy to be awake at all.

Almost everyone I know has left the California Bay Area. It's still my main business travel destination, but no more Muir Woods magic or rainy weekends in Sonoma. I went to the Bay Area only briefly this year, and it's the first time I've ever been to California but not San Francisco.

My son can’t catch a break. First year in three without a major ear surgery (“I was right up near his brain!” in the post surgical briefing) involved a bad bout of influenza, another bad bout of some other respiratory thing that a RAT couldn’t help us with, a concussion from a schoolmate, and a knee dislocation, all up not far off an entire missed school term.

Eclipse. We'll see the eclipse of April 8 from Ontario and then do an as yet poorly planned road trip to New York City over the course of 3 weeks. The flights are booked, at least.

One million cheer meets and cricket games. Much like in 2023. And 2022. And so it is to be in 2024. It emerges, much to everyone’s surprise, that we are not the same people as our children, and they are going to spend their childhood perfecting back handsprings and cut shots, and probably not any dialect of the BASIC programming language.

Stand-up paddleboarding. Not as a regular thing, it’s just that my children enjoy it enough that we occasionally plan an outing around it. Again with the schism between generations; I am more of a kayak person.

Changing house. Either moving or beginning some renovations. How very Sydney.

Photo backlog. I’d really like to do this. Just like I would have liked to do it this past year. But I’d still really like to do it.

Some form of music. This is even worse than the photo backlog: I've been claiming to want to do some form of music or dance lessons for 10 years now. But if my daughter can do two dance troupes and two cheer teams, surely I can do one choir or something. That's the dream.

I’ll add one bonus. I’m just going to say it, from my lips to the universe’s ears: I want a supernova visible to the naked eye in 2024.

I like to share my giving in order to give ideas to folks who don't know where to give, or who might be inspired to do their own research and start giving, even if not to the same organisations.

I have three tiers of 2023 recurring giving. The largest level is organisations I have a longer history of giving to and have more insight into the strategy of. The next level is newer causes I am interested in or exploring. The final one is a "hat tip" level of donations supporting not-for-profit technology organisations whose technology I use a lot.

The Haymarket Foundation is a secular organisation focussed on people experiencing homelessness and disadvantage in Sydney. They specialise in complex homelessness (eg, people with mental health, drug use, or trauma), and we particularly value that they are trans-inclusive. My family has supported them in the past (eg at the start of the pandemic, and as their winter 2022 matching donors).

The National Justice Project, working for systemic change for asylum seekers and refugees, Indigenous people, and detainees, often through strategic legal action. They have advocated for offshore refugee detainees' medical care (eg DCQ18's pregnancy termination, child DWD18's care for self-harm) and for an inquest into police inaction preceding the murder of Indigenous baby Charlie Mullaley (if you have read Jess Hill's See What You Made Me Do, Charlie's terrible death is one of the central stories you may remember).

GiveDirectly Refugees, which gives unconditional cash transfers to long-term refugees in Uganda. Unconditional cash transfers give people in poverty the power to decide what they need to spend money on in order to address their own situation. Australian donors can donate via Effective Altruism Australia to receive a tax deduction.

Note: I am not an uncritical supporter of Effective Altruism, see eg How effective altruism let Sam Bankman-Fried happen, Against longtermism, Effective altruism and disability rights are incompatible. I do specifically like the case for unconditional cash transfers.

Aboriginal Legal Service NSW/ACT: Aboriginal-led justice advocacy and legal representation.

Original Power, building self-determination in Aboriginal and Torres Strait Islander communities, via a Giving Green recommendation.

Farmers for Climate Action, influencing Australia to adopt strong economy-wide climate policies with opportunity for farmers and farming communities, via a Giving Green recommendation.

Purple House / Western Desert Nganampa Walytja Palyantjaku Tjutaku Aboriginal Corporation. Late stage kidney disease is endemic in remote Australia. Purple House runs 18 remote dialysis clinics enabling people to get back to their country and family. It also offers respite and support while dialysing in Alice Springs or Darwin.

Internet Security Research Group, who fund Let’s Encrypt. Let’s Encrypt’s free HTTPS certificates are securing the very page you are viewing right now.

Signal Foundation, who fund Signal messaging. I use Signal for secure communication with many friends and communities.

You may also be interested in the organisations my family supported at the start of the pandemic.

Not dozing in the sun in Munich airport. We flew from Sydney to Rīga via Singapore and Munich, and both transits were terrible: Singapore because it was the middle of the night, and Munich because we wanted it to be and it very much wasn't. But Munich airport has plastic lounge chairs, and we lounged on them in bright summer sun pouring in through huge windows, allegedly fixing our body clocks, or at least, being awake.

Wandering in a daze of pain through Muir Woods. I went to Muir Woods twice this year, on both trips to the Bay Area. The second time I had some kind of digestive upset and ended up in a slightly altered state of consciousness, torn between pain and the somewhat meditative experience that is inherent to Muir Woods.

Carrying a couch up our street in breaks between rain. We rented office space (former therapy rooms, in fact) near our home in 2021 in order to have a place to work that wasn’t also doubling as a school and 24/7 nuclear family circle of hell. I found it hard to let go of the lease before winter was over since it was difficult to imagine a winter without society closing down.

And then I tore my MCL skiing — the opposite of that kind of winter — and it was hard to give up the lease because I couldn’t help move the furniture out of it. Finally, with the historic spring rains, it was time, and the couch was the last thing out, moved to our porch for a freebie pickup just ahead of a forecast downpour.

Georgian food at Alaverdi, gruzīnu restorāns, in Rīga. Mostly I remember cheese dipped in honey, and wine, and the fact that it was 10pm and not yet sunset.

Smoked pork on rye bread, bread pudding, pickled cabbage in various colours, and honey vodka, among other parts of our food tour of the Rīga Central Market. It started inauspiciously with the guide being late (only a little), but then she was thrilled to meet Australians ("they let you out!" "people from Australia and New Zealand are Latvia's best tourists!") and even our picky child was moderately pleased with the idea of an afternoon snack of pork and bread.

Fish at Doyle's at sunset. I've lived in Sydney for 24 years and there's still a raft of fundamental Sydney things I haven't done; this was this year's. The sunset is the key, more than the fish.

Mid-childhood. My children are nearly 9 and 13. They were never not people, but at this stage being a kid of a particular age is a less dominant part of their identity than being particularly themselves. So we have cricket that approaches a part-time job in its commitment level, 5:30am starts to get to cheerleading, sketches in advance of a future fashion label, and someone unexpectedly installing chess apps on their phone alongside TikTok.

Certainty. I haven’t had to tell anyone that their holidays or school or surgery aren’t happening or are delayed or are being replaced with some rushed together home equivalent. I skipped only one family event myself due to illness/contagiousness.

A welcome summer. It rained so much this year and has been so cool that the moderately warm summer days of late December have been quite welcome and joyful, rather than the harbinger of unpleasantness they can be in many years. Watching the sunset from Milk Beach on Christmas Day while a group danced to salsa music from someone's phone; the morning of New Year's Eve supervising pre-teen girls squealing in the gentle surf at Wattamolla. Beautiful.

A year of pundits being terribly wrong about the biggest of big stories. Putin won’t invade Ukraine (Atlantic Council, BBC, Al-Jazeera, University of Melbourne), China will not exit zero COVID (Time, the Atlantic, Bloomberg).

Apparently our former Prime Minister was formerly several other ministers too. The point I, and many others, return to a lot, is “but, also, why?” This is yet to be satisfactorily explained.

Two and a half metres of rain for Sydney. Someone I know lost a friend in the regional flooding.

Fatigue-and-pain hour. I had COVID at the end of January, it felt like being the last person to get it, but the seroprevalence surveys put me only in the first 40% or so of Australians. Overall I’d put it at worse than most colds, better than influenza, and certainly much better than that time I had early sepsis (which is quite the barometer for bad illness). Its tendency over about the next two weeks to show up arbitrarily once a day for about an hour at a time, fatigue-and-pain hour, was the most distinct part of it.

A plane rocketing down a runway ahead of taking off. Twelve times altogether I suppose but I most distinctly remember the first one leaving Sydney for San Francisco in March, 772 days since my previous plane flight in February of 2020.

The dim shape of chairlifts in the clouds. Our first day in Falls Creek in August was a windy whiteout (they evacuated the mountain at lunchtime), and my daughter gamely skied down a run with me, with neither of us having skied in two years. I was motivated mostly to keep the chairlift in sight for reference rather than find an easy slope, and so was very proud of her fortitude.

Tech layoffs and the associated rumour mills, churn, and anxiety. It's been especially hard since the economic boom that immediately preceded it was very much a money-boom; during that period folks were sick, sad, and isolated, and do not have an emotional boom-time worth of resilience.

Relatedly, the mid-career exit of women I know in tech continues apace.

Sulking at a hotel window view of Falls Creek’s Summit area during the “walking is very uneasy and requires a lot of planning” phase of my MCL injury. A very winter sport moment, but I had finally found an instructor to sort out my parallel turns this time for sure, just long enough for me to catch the wrong edge ahead of the end of week bluebird days, and it was frustrating.

Both types of NSW beach road trip, that is, north and south, and both in the next four weeks. We booked the southern one, back to the same town where we holidayed in 2019, 2021, and 2022, some of my family booked in to meet us there, and then my son was invited to play in a cricket carnival about as far in the opposite direction as is possible. So, northern cricket tournament first, unpack cricket gear, wash remainder of clothes, head south.

SCUBA. The big hobby of our 20s, but early mornings and babysitters made it so unappealing in our 30s. We’re hoping to dive off Byron Bay, which I have wanted to do for at least 15 years. I don’t think going back to 20 dives a year is on the cards, but, I plan to do a handful of them.

Falls Creek re-run. 2022 was our first extended family ski trip. We stayed in a local family's hotel full of regulars, with a communal lounge and babysitting and dining for kids, and of such are family traditions made.

A narrative of my career that makes sense. My career isn’t bad — it’s highly paid and I get good reviews — but its current iteration is very formed by “just get through this crisis and then” where “this crisis” refers to at least four completely distinct events over three years at this point. A sustainable narrative is what I want.

North American winter, a year from now. I wanted to do this the year my son was 10, it somehow seemed like the perfect age. That year was 2020, so, we did not. I don’t dread the flights or the prices less after Europe in 2022; this may be a case of wanting to want something. But I’ve wanted to want it for a long time!

Catch up on my photography backlog. I’m almost, but not quite, three years behind. I’m not ready to give up. It’s a lot, but there’s a lot of beauty and memories in there that I want access to.

April 2022

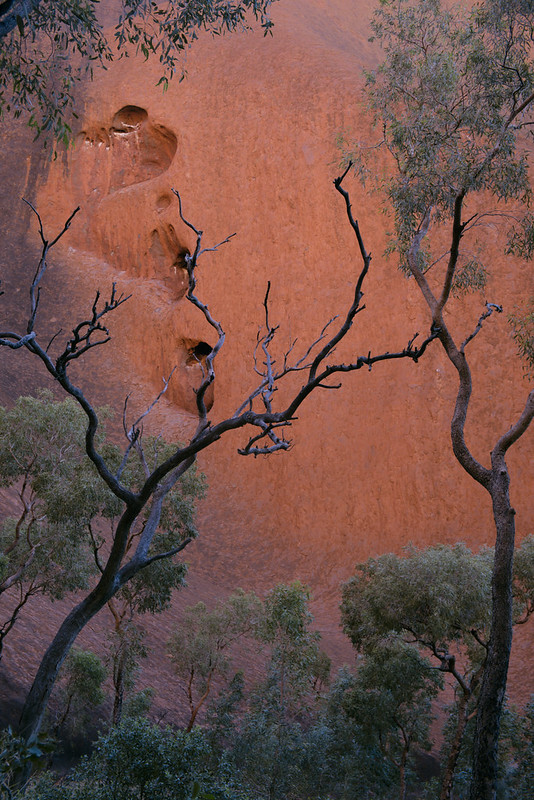

In utmost naivety, we expected the red centre to be only rock and red dirt. But the whole desert is alive with desert oak and spinifex (and, if you are lucky, quandong), and Uluṟu in particular attracts and shelters water, and thereby, herds of ghost gum and zebra finches.

The wettest and greenest part is in the near-permanent shade of the Mutijulu waterhole. People defecated on the rock when climbing was allowed; Mutijulu won't be safe for human consumption for 20 more years.